AWS Debuts Amazon EC2 P6-B300 Instances Featuring NVIDIA Blackwell Ultra GPUs

Amazon Web Services has launched Amazon EC2 P6-B300 instances, a significant upgrade in cloud infrastructure for training trillion-parameter foundation models. Powered by NVIDIA Blackwell Ultra GPUs, the new instances are available in the US East (N. Virginia) and US West (Oregon) regions as of May 2026. The release targets enterprise decision-makers and AI researchers who need massive computational scale for the next generation of large language models.

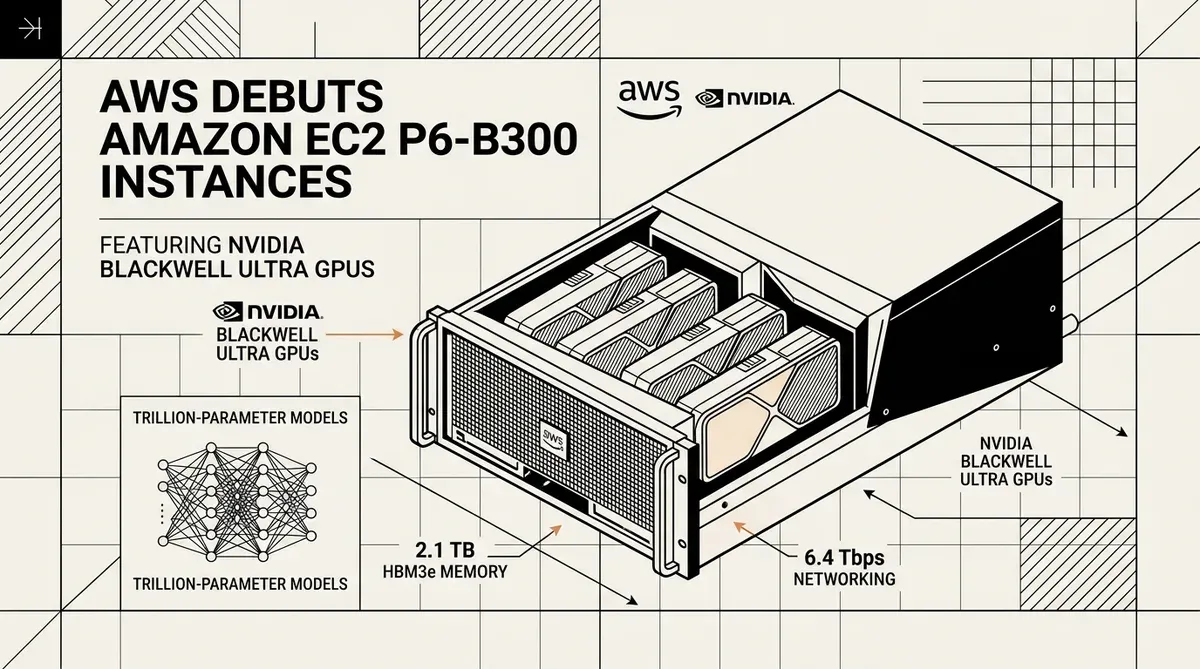

Each Amazon EC2 P6-B300 instance integrates eight NVIDIA Blackwell Ultra GPUs and 2.1 TB of high-bandwidth GPU memory (HBM3e), a 50% increase over the P6-B200 models. The additional memory lets larger model weights stay resident on the GPUs, reducing how often data must be swapped in and out and speeding up the training of complex neural networks.
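As a rough illustration of what the memory figure means for model size, the sketch below estimates the raw weight footprint of a trillion-parameter model at several numeric precisions and compares it with the 2,144 GB of HBM3e listed for one instance. The bytes-per-parameter table and the helper names are assumptions made for this example. It counts weights only, so gradients, optimizer state, and activations, which dominate during training, are left out.

```python
# Back-of-the-envelope sizing, assuming weights are the only thing in GPU memory.
GPU_MEMORY_GB = 2144       # total HBM3e per instance, from the article's spec list
GPUS_PER_INSTANCE = 8      # GPUs per instance, from the article

# Assumed storage cost per parameter for common numeric formats (illustrative).
BYTES_PER_PARAM = {"FP32": 4.0, "BF16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weights_gb(num_params: float, precision: str) -> float:
    """Return the size of the raw weights in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_instance(num_params: float, precision: str) -> bool:
    """True if the weights alone fit in one instance's total GPU memory."""
    return weights_gb(num_params, precision) <= GPU_MEMORY_GB


# Even split of the instance total across its GPUs.
per_gpu_gb = GPU_MEMORY_GB / GPUS_PER_INSTANCE

print(f"HBM3e per GPU: {per_gpu_gb:.0f} GB")
for precision in BYTES_PER_PARAM:
    size = weights_gb(1e12, precision)
    verdict = "fits" if fits_on_instance(1e12, precision) else "does not fit"
    print(f"1T parameters at {precision}: {size:,.0f} GB of weights, {verdict}")
```

Under these assumptions, lower-precision formats are what bring trillion-parameter weights within reach of a single instance. Real training jobs need several times the weight footprint and are sharded across many instances.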

Technical Specifications and Networking Performance

Beyond raw GPU power, the Amazon EC2 P6-B300 instances introduce major improvements to data movement and system memory. Built on the AWS Nitro System, these instances offload I/O and security functions to dedicated hardware, ensuring that the primary compute resources remain focused on AI workloads. The system memory has been scaled to 4,096 GiB to support the data-intensive nature of modern AI pipelines.

Networking capacity doubles with EFAv4 (Elastic Fabric Adapter). Each instance delivers 6.4 Tbps of EFA networking bandwidth, alongside 300 Gbps of dedicated ENA (Elastic Network Adapter) throughput. This high-speed interconnect is essential for distributed training, where thousands of GPUs must communicate with minimal latency to synchronize model gradients. PCIe Gen6 supports this throughput inside the instance by doubling the per-lane transfer rate of PCIe Gen5 between system components.

- 8x NVIDIA Blackwell Ultra GPUs per instance.

- 2,144 GB of HBM3e GPU memory.

- 6.4 Tbps EFAv4 networking bandwidth.

- 4,096 GiB of system memory.

- PCIe Gen6 integration for enhanced data transfer.
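To put the 6.4 Tbps figure in context, the following sketch converts it to bytes per second and applies the standard ring all-reduce traffic formula to estimate a bandwidth-only lower bound on gradient synchronization time. It is an idealized model, not a benchmark. It assumes each instance acts as one ring participant with the full EFA bandwidth available, and it ignores latency, protocol overhead, gradient sharding, and overlap with compute. The function names and the example workload are hypothetical.

```python
import math

EFA_TBPS = 6.4           # per-instance EFA bandwidth, from the article
GPUS_PER_INSTANCE = 8    # GPUs per instance, from the article


def tbps_to_gb_per_s(tbps: float) -> float:
    """Convert terabits per second to decimal gigabytes per second."""
    return tbps * 1000 / 8


def ring_allreduce_seconds(payload_gb: float, num_nodes: int,
                           node_gb_per_s: float) -> float:
    """Bandwidth-only lower bound for a ring all-reduce.

    Each participant sends 2 * (N - 1) / N times the payload in total:
    one pass to reduce-scatter and one pass to all-gather.
    """
    if num_nodes < 2:
        return 0.0  # a single node has nothing to exchange
    traffic_gb = 2 * (num_nodes - 1) / num_nodes * payload_gb
    return traffic_gb / node_gb_per_s


node_bw = tbps_to_gb_per_s(EFA_TBPS)
per_gpu_gbps = EFA_TBPS * 1000 / GPUS_PER_INSTANCE

# Hypothetical workload: 2,000 GB of gradients synchronized across 16 instances.
estimate = ring_allreduce_seconds(payload_gb=2000, num_nodes=16,
                                  node_gb_per_s=node_bw)

print(f"EFA bandwidth per instance: {node_bw:.0f} GB/s")
print(f"EFA share per GPU: {per_gpu_gbps:.0f} Gbps")
print(f"Idealized all-reduce time: {estimate:.2f} s")
```

Because the traffic term approaches twice the payload as the ring grows, per-node bandwidth rather than node count sets the floor in this model, which is why a doubling of EFA throughput matters for large clusters.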

Strategic Implications for AI Development

The introduction of the Amazon EC2 P6-B300 instances reflects the intensifying race to provide the infrastructure necessary for "frontier" AI models. By offering 1.5x the GPU TFLOPS at FP4 precision of the previous generation, AWS is positioning itself as the leading destination for organizations developing trillion-parameter models. The transition to HBM3e memory and PCIe Gen6 connectivity addresses two major bottlenecks in AI scaling: memory capacity and interconnect speed.

For CTOs and AI strategists, this launch provides a clear path for scaling operations without the immediate need for on-premises hardware investment. The availability of these instances in major AWS regions allows for immediate deployment of high-performance clusters. As the demand for multimodal AI and sophisticated reasoning models grows, the ability to access Blackwell Ultra silicon via the cloud is a key competitive advantage for firms looking to minimize time-to-market for new AI products. This flexibility is particularly useful for startups that need to burst compute capacity during final model training phases.

AWS confirmed that p6-b300.48xlarge is the primary size for these instances. Organizations can begin provisioning them through the AWS Management Console or the AWS Command Line Interface to support their most demanding machine learning projects. The company has indicated that these instances are optimized for the latest UltraServer architectures, which can aggregate up to 13,320 GB of GPU memory across a single cluster for massively parallel processing tasks.
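Before provisioning, it can help to check where the instance size is actually offered. The sketch below is one way to do that with the AWS SDK for Python, using the EC2 `DescribeInstanceTypeOfferings` API. It assumes `boto3` is installed and AWS credentials are configured. The helper names are hypothetical, and whether the API returns any offerings depends on the account and region.

```python
def offering_query(instance_type: str = "p6-b300.48xlarge") -> dict:
    """Build keyword arguments for EC2 DescribeInstanceTypeOfferings."""
    return {
        "LocationType": "availability-zone",
        "Filters": [{"Name": "instance-type", "Values": [instance_type]}],
    }


def zones_offering(region: str,
                   instance_type: str = "p6-b300.48xlarge") -> list[str]:
    """Return the Availability Zones in `region` that offer `instance_type`.

    Makes a live AWS API call. Pagination is not handled here, on the
    assumption that a single instance type yields a short result list.
    """
    import boto3  # imported lazily so offering_query works without the SDK

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.describe_instance_type_offerings(**offering_query(instance_type))
    return sorted(o["Location"] for o in response["InstanceTypeOfferings"])
```

Calling `zones_offering("us-east-1")` or `zones_offering("us-west-2")` queries the two regions named in the article, US East (N. Virginia) and US West (Oregon). An empty list means the size is not offered to that account in that region.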


