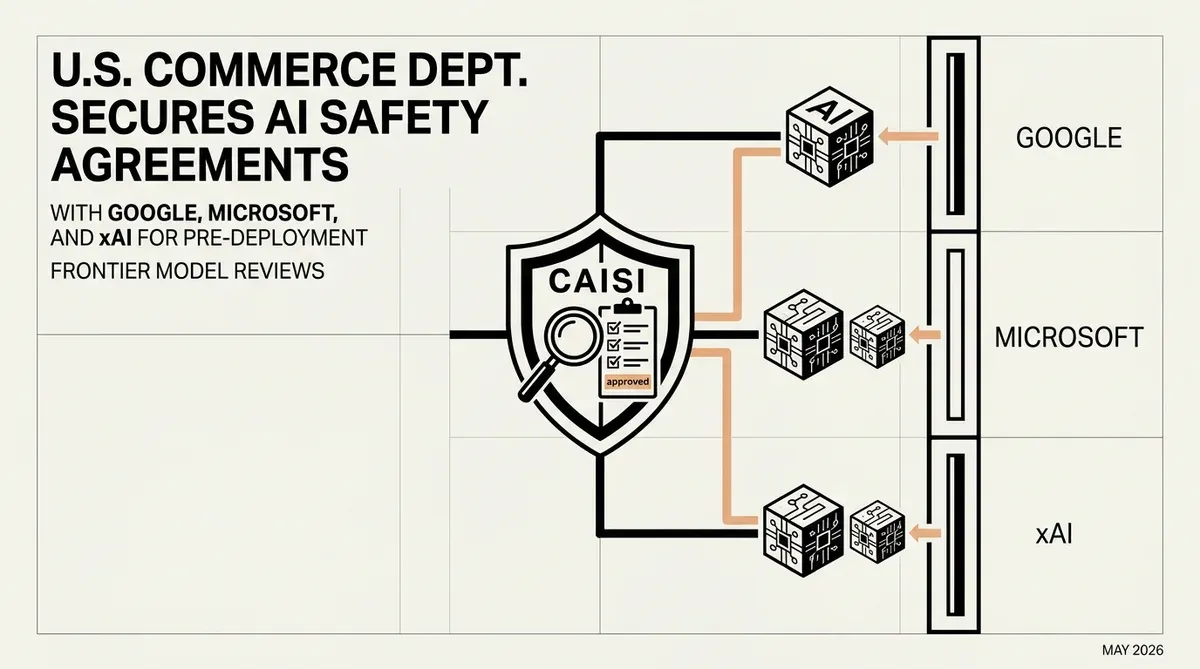

U.S. Commerce Department Secures AI Safety Agreements with Google, Microsoft, and xAI

The U.S. Department of Commerce has expanded its oversight of artificial intelligence by securing voluntary AI safety agreements with Google DeepMind, Microsoft, and xAI. Announced on May 5, 2026, these pacts grant the Center for AI Standards and Innovation (CAISI) early access to frontier models before their public release. This move allows federal experts to conduct rigorous national security reviews and risk-based assessments on the most advanced systems currently under development.

Under the terms of these agreements, CAISI will evaluate upcoming AI models for specific high-stakes vulnerabilities. The primary focus of these evaluations includes identifying risks related to cybersecurity, biosecurity, and the potential misuse of AI in developing chemical weapons. By formalizing these partnerships, the U.S. government aims to establish a standardized framework for testing the safety limits of generative technology before it reaches the commercial market.

Strategic Implications of New AI Safety Agreements

The inclusion of Google DeepMind, Microsoft, and xAI significantly expands the U.S. AI safety initiative. These companies join OpenAI and Anthropic, both of which had previously aligned with CAISI directives. For enterprise leaders and strategists, this unified front suggests that pre-deployment testing is becoming a standard expectation for any organization developing large-scale foundation models. The participation of xAI is particularly notable, as it indicates that even newer market entrants are being brought into the federal safety fold.

The organization's transition from the U.S. AI Safety Institute to CAISI reflects a broader institutional commitment to long-term AI governance. The agency is tasked with creating the technical benchmarks that will likely define future regulatory compliance. For CTOs and founders, the immediate takeaway is that "frontier" status now carries an expectation of pre-release federal scrutiny, even though the agreements themselves are voluntary. This could affect development timelines, as companies must factor in the time required for CAISI to complete its national security assessments.

This collaborative approach seeks to balance innovation with public safety without resorting to immediate, rigid legislation. By relying on voluntary AI safety agreements, the Commerce Department maintains a flexible relationship with the private sector while gaining deep technical insight into the capabilities of next-generation models. As of May 2026, the focus remains on preventing catastrophic misuse, but the data gathered during these reviews will almost certainly inform the next phase of global AI policy and safety standards.

AI-generated image.
