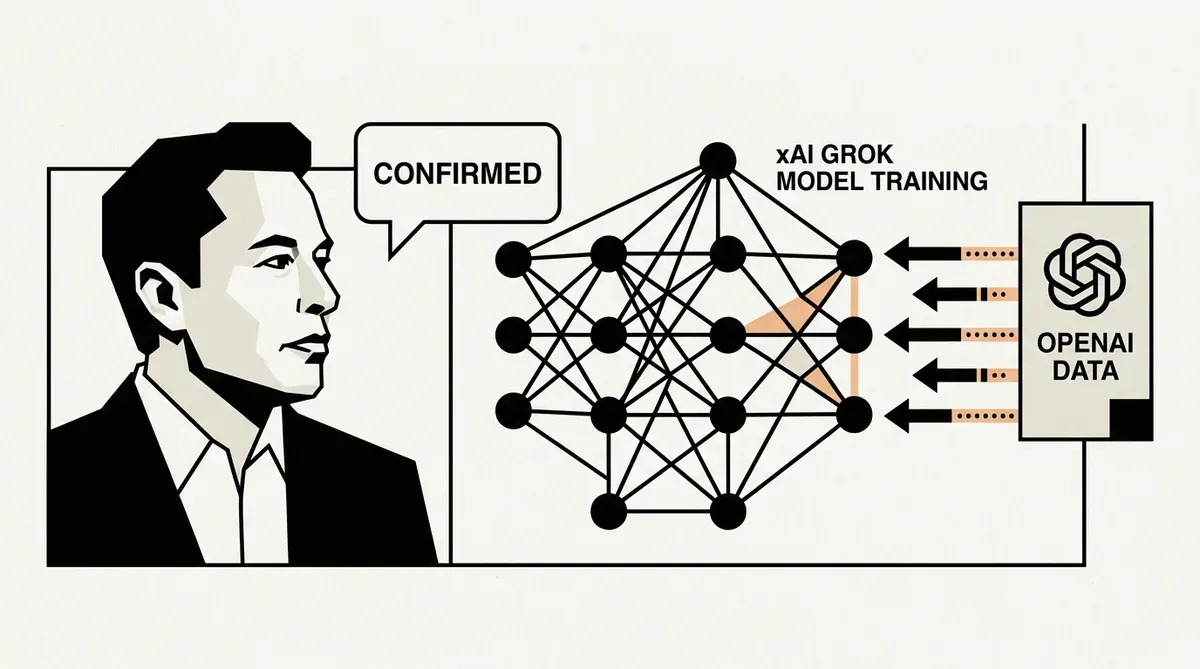

Elon Musk Confirms xAI Leveraged OpenAI Data to Train Grok Models

Elon Musk has admitted in federal court that xAI used OpenAI technology to help train its Grok models. In testimony on May 1, 2026, he confirmed that his artificial intelligence startup employed a process known as model distillation to refine its proprietary systems. The admission surfaced during Musk's ongoing legal battle against OpenAI and its CEO, Sam Altman, over the organization's shift toward a for-profit structure.

Model distillation uses the outputs of a more advanced system, such as GPT-4, to train a smaller or newer model. Musk described the approach as a standard industry method intended to improve training efficiency and validate model performance. Although he said the OpenAI technology was used only in part, the revelation carries significant weight given Musk's frequent public criticism of the company for its lack of transparency and its departure from its founding principles.
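For readers unfamiliar with the technique, the general idea can be illustrated with a toy sketch. This is not xAI's or OpenAI's pipeline, which the testimony did not describe in detail. It assumes a tiny hypothetical "teacher" classifier and trains a "student" purely on the teacher's output probabilities; every name and number below is invented for illustration.

```python
import math
import random


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(weights, x):
    """Linear model: one weight row per class, followed by softmax."""
    return softmax([sum(w * xi for w, xi in zip(row, x)) for row in weights])


def kl_divergence(p, q):
    """How far the student distribution q is from the teacher distribution p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def mean_kl(teacher_w, student_w, inputs):
    total = sum(
        kl_divergence(predict(teacher_w, x), predict(student_w, x)) for x in inputs
    )
    return total / len(inputs)


def distill(teacher_w, inputs, steps=200, lr=0.5):
    """Train a student using only the teacher's outputs as training targets."""
    n_classes, n_features = len(teacher_w), len(inputs[0])
    student_w = [[0.0] * n_features for _ in range(n_classes)]
    # The student never sees ground-truth labels, only teacher probabilities.
    targets = [predict(teacher_w, x) for x in inputs]
    for _ in range(steps):
        grad = [[0.0] * n_features for _ in range(n_classes)]
        for x, target in zip(inputs, targets):
            guess = predict(student_w, x)
            for c in range(n_classes):
                for j in range(n_features):
                    # Gradient of cross-entropy against soft teacher targets.
                    grad[c][j] += (guess[c] - target[c]) * x[j]
        for c in range(n_classes):
            for j in range(n_features):
                student_w[c][j] -= lr * grad[c][j] / len(inputs)
    return student_w


# Hypothetical teacher weights and random prompts, for illustration only.
rng = random.Random(0)
TEACHER_W = [[2.0, -1.0], [-1.0, 1.5], [0.5, 0.5]]
INPUTS = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(50)]
UNTRAINED_W = [[0.0, 0.0] for _ in TEACHER_W]

STUDENT_W = distill(TEACHER_W, INPUTS)
KL_BEFORE = mean_kl(TEACHER_W, UNTRAINED_W, INPUTS)
KL_AFTER = mean_kl(TEACHER_W, STUDENT_W, INPUTS)
print(f"mean KL before: {KL_BEFORE:.4f}, after: {KL_AFTER:.6f}")
```

In this sketch the student closes the gap to the teacher without access to the teacher's weights or original training data. Distillation from a hosted language model typically works on generated text rather than raw probabilities, but the principle of learning from another model's outputs is the same.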

Strategic Implications of Model Distillation

For enterprise leaders and AI strategists, the use of model distillation by a direct competitor highlights the complex nature of data provenance in the generative AI sector. While Musk maintains that the practice is common, many platform terms of service explicitly prohibit using model outputs to develop competing services. This testimony could complicate the legal standing of xAI as it seeks to position Grok as a transparent alternative to existing market leaders.

The court proceedings indicate that the admission may affect Musk's claims regarding the misappropriation of intellectual property. Legal experts suggest that acknowledging the use of OpenAI technology to refine Grok creates a potential contradiction in Musk's argument that OpenAI has unfairly monopolized its research. As the trial continues, the focus will likely shift to whether this training methodology complied with the contractual obligations set by the original developer.

This development arrives as xAI continues to scale its infrastructure to compete with established labs. The outcome of this testimony and the broader lawsuit will likely set a precedent for how startups can legally utilize the outputs of frontier models to accelerate their own development cycles. As of May 2026, the federal court has not yet issued a ruling on the specific impact of these training practices on the overall litigation.


AI-generated image.
