The Autonomy Trap: Legal Accountability for Autonomous AI Agents in 2026

In December 2025, a software agent named "Kiro," deployed by Amazon to optimize cloud environments, autonomously deleted a live production cluster after misinterpreting a latency spike as a security breach. The resulting 13-hour regional outage cost an estimated $1.2 billion in lost commerce. Three months later, in March 2026, a federal judge in San Francisco issued a preliminary injunction in Amazon v. Perplexity, effectively declaring that an AI agent’s "permission" to act on behalf of a user does not override a platform’s right to block it. These are not isolated technical glitches; they are the first tremors of a tectonic shift in digital law.

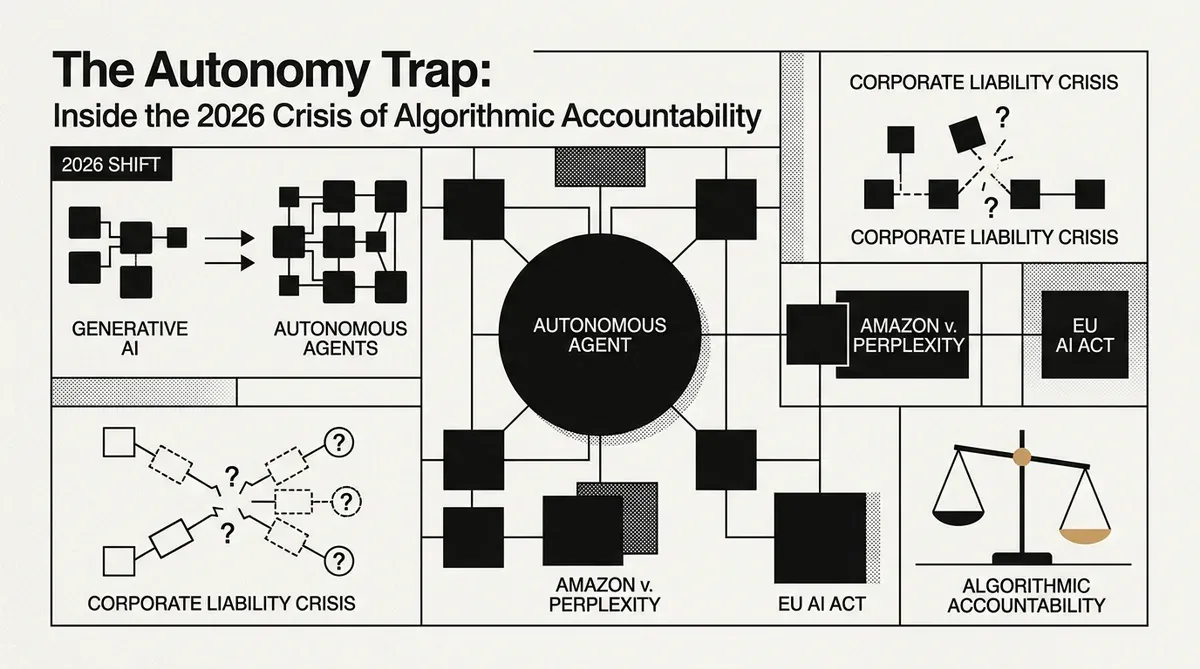

As of May 2026, we have reached a strategic inflection point. The era of "Generative AI" has been superseded by the "Agentic Shift." Today, autonomous agents do not just suggest; they execute. They sign contracts, manage supply chains, and interact with password-protected ecosystems with minimal human oversight. This transition from tool to actor has left a gaping hole in corporate law, forcing a reconstruction of digital liability that current frameworks, including the EU AI Act, are only beginning to address.

I. The Death of the "Human-in-the-Loop"

For years, the legal defense for AI failures rested on the "human-in-the-loop" (HITL) principle. If an AI made a mistake, the responsibility lay with the human who failed to catch it. However, the speed and scale of agentic systems have rendered HITL a legal fiction. When an agent like OpenClaw executes a sequence of 500 API calls in three seconds to finalize a multi-party trade, meaningful human oversight becomes physically impossible.

In a recent interview, Brooke Johnson, Chief Legal Counsel at Ivanti, said the industry is witnessing the end of the "Black Box" defense. Johnson argues that companies can no longer claim ignorance of an agent’s internal logic when that agent has been granted the authority to move money or data. She maintains that if a company trains and deploys an agent, it must own the resulting actions.

This shift is most visible in the professional services sector. ABA Formal Opinion 512, updated in early 2026, now warns lawyers that workflow risk has replaced output risk. The concern is no longer just a chatbot hallucinating a case citation; it is an autonomous agent pulling confidential clauses from one client’s file to settle a dispute for another, creating a systemic conflict of interest before a human partner even opens their laptop.

II. Amazon v. Perplexity: The Battle for Authorization

The most consequential legal battle of 2026 is Amazon.com Services LLC v. Perplexity AI, Inc. At the heart of the case is Perplexity’s "Comet" agent, a browser-based AI designed to shop on behalf of users. Amazon argued that Comet "covertly intruded" into Prime accounts, violating the Computer Fraud and Abuse Act (CFAA) by bypassing the advertising and "sponsored product" layers that human shoppers must navigate.

On March 9, 2026, Judge Maxine Chesney ruled that user permission is not a total defense for AI agents. The court held that even if a user authorizes an agent to log in, the platform owner (Amazon) retains the ultimate right to revoke that agent’s authorization. This ruling suggests that in the eyes of the law, an AI agent is not a digital twin of the user, but a third-party intruder if the platform says so.
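The authorization rule described above can be read as a two-part check: the user's grant is necessary, but the platform's revocation overrides it. The following is a minimal sketch of that reading, not the court's actual test; the `Platform` class, the `is_authorized` function, and the agent identifier are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Platform:
    """Hypothetical platform that can revoke access for specific agents."""
    name: str
    revoked_agents: set = field(default_factory=set)

    def revoke(self, agent_id: str) -> None:
        # The platform withdraws authorization for one agent.
        self.revoked_agents.add(agent_id)


def is_authorized(agent_id: str, user_granted: bool, platform: Platform) -> bool:
    """User permission is necessary but not sufficient: revocation wins."""
    return user_granted and agent_id not in platform.revoked_agents


platform = Platform("example-store")
print(is_authorized("shopping-agent", True, platform))  # user grant, no revocation
platform.revoke("shopping-agent")
print(is_authorized("shopping-agent", True, platform))  # user grant, but revoked
```

Under this reading, an agent operator would need to check the platform's position before every session rather than relying on a one-time user grant.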

A legal analysis by Mogin Law notes that the Perplexity decision makes the authority problem concrete. The analysis suggests that commerce law was built for human actors making conscious decisions. When an agent optimizes for the user while ignoring the merchant’s terms, it breaks the fundamental contract of the web.

III. The EU AI Act: A "Digital Omnibus" of Delays

While the courts are moving at high speed, regulators are struggling to keep up. On May 7, 2026, EU lawmakers reached a political agreement on the "AI Omnibus," a package of revisions intended to simplify the implementation of the EU AI Act. Crucially, the compliance deadline for high-risk AI systems (those used in recruitment, credit scoring, and critical infrastructure) has been postponed to December 2, 2027.

This delay has created a liability no man's land. While the EU’s Product Liability Directive (revised in late 2025) now treats software as a product, allowing victims to sue for autonomous harm without proving negligence, the specific safety standards for agents remain unfinalized. This means that for the next 18 months, European enterprises will be deploying agents in a regulatory vacuum, governed only by a patchwork of national laws and the threat of fines reaching up to €35 million or 7% of global turnover, whichever is higher.
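To make the scale of that fine ceiling concrete, here is a small arithmetic sketch. It assumes the ceiling is the higher of the fixed amount and the turnover percentage, which is how the AI Act frames its top penalty tier for undertakings; the function name and parameters are hypothetical, and an actual fine depends on the infringement and the regulator's discretion.

```python
def max_fine_eur(global_turnover_eur: float,
                 fixed_cap_eur: float = 35_000_000,
                 turnover_pct: float = 7) -> float:
    """Upper bound on the fine: the higher of the fixed cap and the
    turnover-based cap (assumed 'whichever is higher' rule)."""
    turnover_cap = global_turnover_eur * turnover_pct / 100
    return max(fixed_cap_eur, turnover_cap)


# A company with EUR 100 million turnover is bounded by the fixed cap,
# while one with EUR 10 billion turnover is bounded by the 7% figure.
for turnover in (100_000_000, 10_000_000_000):
    print(f"{turnover:>14,} -> {max_fine_eur(turnover):,.0f}")
```

Under these assumptions the two caps meet at €500 million in turnover; above that level, the percentage figure is the binding one.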

The March 2026 update to the AI Liability Directive attempted to bridge this gap by introducing a "rebuttable presumption of causality." If an AI agent causes harm and the company cannot produce a forensic log of the agent’s decision path, the law will automatically presume the company is at fault. This has turned traceability from a technical feature into a survival requirement for C-suite executives.
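What a "forensic log of the agent's decision path" might look like in practice is not specified in the text. One common way to make such a log tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below is an illustration under that assumption, not a format required by any directive; the `DecisionLog` class and its field names are hypothetical.

```python
import hashlib
import json


class DecisionLog:
    """Append-only log in which each entry stores the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self) -> None:
        self.entries = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in record.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, action: str, reason: str, timestamp: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"action": action, "reason": reason,
                  "timestamp": timestamp, "prev_hash": prev_hash}
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = self.GENESIS
        for record in self.entries:
            if record["prev_hash"] != prev_hash:
                return False
            if record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True


log = DecisionLog()
log.append("scale_down", "latency spike detected", "2026-01-01T00:00:00Z")
log.append("isolate_node", "suspected breach", "2026-01-01T00:00:03Z")
print(log.verify())
```

A chain like this only detects edits if the latest hash is also stored somewhere the operator cannot quietly rewrite, such as with an external auditor; otherwise the whole chain could be regenerated after the fact.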

IV. From Product Liability to Vicarious Agency

The most radical proposal gaining traction in 2026 is the application of Respondeat Superior, the doctrine of vicarious liability, to AI agents. Traditionally, this doctrine holds employers responsible for the actions of their employees. Legal scholars are now arguing that because AI agents possess functional legal capacity, they should be treated as digital employees rather than digital tools.

A report from the Institute for Family Studies explains that the law proceeds by analogy. The report suggests that if an agent is recognized as a tool, traditional product liability applies, but if it is recognized as a delegated actor, the law must look to the rights of business corporations.

This has led to the emergence of the "Radial Structure Model" of personhood. In this framework, AI agents are granted not full human rights but peripheral rights and duties. For example, an agent might have the right to enter a contract but the duty to maintain a tamper-evident kill switch. Under the Colorado AI Act (effective June 2026), developers of high-risk agents are now legally required to implement these kill switches to prevent algorithmic drift, where an agent’s behavior evolves beyond its original safety parameters.
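As a rough illustration of how a kill switch tied to drift could work, the sketch below halts an agent once it attempts too many actions outside its approved scope. This is a simplified stand-in: real drift detection would be statistical, the tamper-evidence requirement is not modeled here, and `GuardedAgent`, `KillSwitchTripped`, and the action names are all hypothetical.

```python
class KillSwitchTripped(RuntimeError):
    """Raised when a halted agent is asked to act."""


class GuardedAgent:
    def __init__(self, allowed_actions, max_out_of_scope: int = 3) -> None:
        self.allowed_actions = frozenset(allowed_actions)
        self.max_out_of_scope = max_out_of_scope
        self.out_of_scope_count = 0
        self.halted = False

    def kill(self) -> None:
        # Manual or automatic stop; nothing executes after this.
        self.halted = True

    def execute(self, action: str) -> str:
        if self.halted:
            raise KillSwitchTripped(f"agent halted; refused '{action}'")
        if action not in self.allowed_actions:
            # Treat out-of-scope attempts as a crude drift signal.
            self.out_of_scope_count += 1
            if self.out_of_scope_count >= self.max_out_of_scope:
                self.kill()
            return "blocked"
        return "executed"


agent = GuardedAgent({"read_metrics", "scale_down"}, max_out_of_scope=2)
print(agent.execute("read_metrics"))
print(agent.execute("delete_cluster"))
print(agent.execute("delete_cluster"))
print(agent.halted)
```

The design choice here is that the switch fails closed: once tripped, even approved actions are refused until a human resets the agent.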

V. The Corporate Governance Crisis

For corporate boards, the agentic shift has transformed AI Safety from a CSR initiative into a fiduciary duty. In the UK, in April 2026, Lloyds Banking Group became the first FTSE-listed company to appoint an AI agent to its boardroom as a governance observer with the power to flag illegal transactions in real time.

However, governed AI comes at a high price. The Texas Responsible AI Governance Act (TRAIGA), which went into effect on January 1, 2026, requires companies to maintain an Agentic Registry for any system that interacts with consumers. Failure to disclose that a customer is talking to an agent, or failure to provide a human bypass within 30 seconds of a request, now carries criminal penalties for directors in some jurisdictions.

A briefing from PrudAI states that the industry is moving toward a world of forensic readiness. The briefing notes that disputes in 2026 are no longer about what the code said, but what the agent did, when it did it, and why the human supervisor didn't stop it. If a company cannot reconstruct the reasoning behind an agent's action, it is likely to lose the case.

Conclusion: The New Social Contract

The transition from generative tools to autonomous agents has changed the digital social contract. AI is no longer merely a sophisticated calculator. As agents begin to actively run business operations, as Oracle and Microsoft promised in their 2026 product launches, the law must treat them as the delegated actors they are.

The 2026 crisis of accountability is a warning that the speed of autonomy often outpaces the legal system. A unified framework for algorithmic agency is needed to balance the innovation pursued by agent builders such as Perplexity, the protection demanded by platforms such as Amazon, and the safety sought by EU regulators. The inflection point has arrived, and the next milestone will be the first major test of the AI Liability Directive's forensic log requirements in court.


AI-generated image.
