NVIDIA Unveils Nemotron 3 Nano Omni to Streamline Multimodal AI Workflows

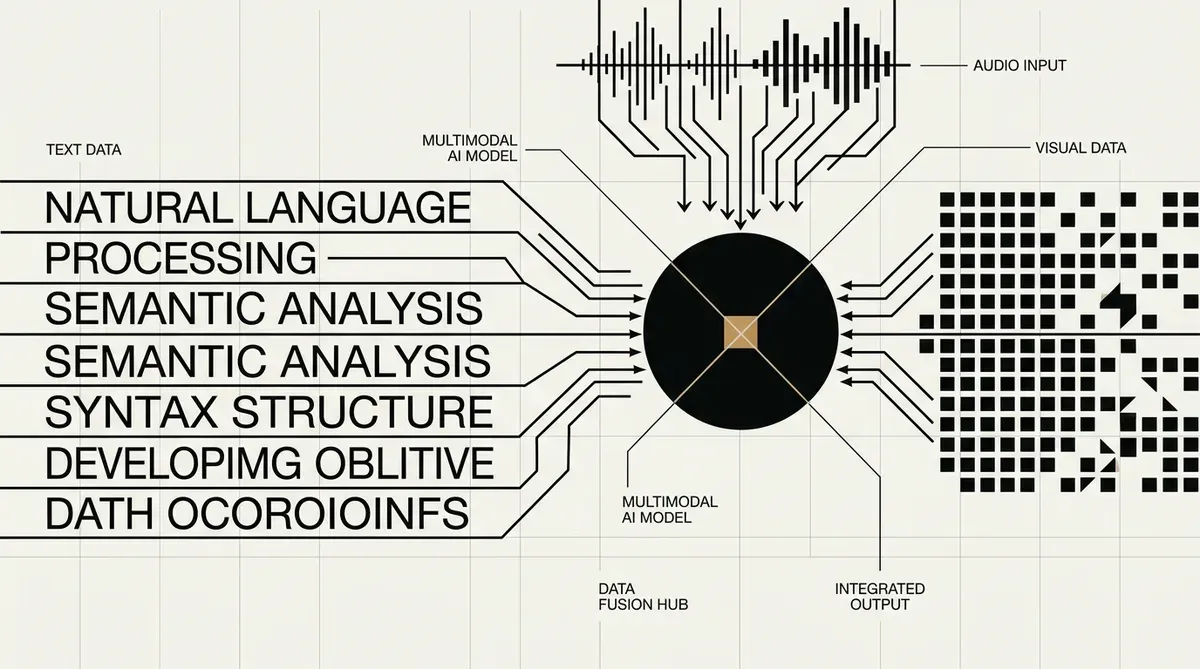

NVIDIA has launched Nemotron 3 Nano Omni, a 30-billion-parameter model that handles text, image, video, and audio processing in a single architecture. Released this week, the model uses a hybrid Mixture-of-Experts (MoE) design that activates only about 3 billion parameters per token during inference. Because only a fraction of the weights run for each token, per-token compute stays well below that of a dense 30-billion-parameter model, and one model replaces the separate models otherwise needed for each input type.
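The sparse activation described above can be illustrated with a minimal top-k routing sketch. This is a generic toy example of MoE routing, not NVIDIA's implementation: the expert count, the value of `k`, and the toy expert functions are all assumptions chosen so that the active share matches the article's 3-of-30-billion ratio.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k):
    """Run only the selected experts and combine their weighted outputs."""
    selected = route_top_k(router_logits, k)
    output = sum(weight * experts[i](x) for i, weight in selected)
    return output, [i for i, _ in selected]

# Hypothetical toy setup: 10 experts, 1 active per token (10% of expert weights).
NUM_EXPERTS = 10
TOP_K = 1
experts = [lambda x, scale=i + 1: scale * x for i in range(NUM_EXPERTS)]
router_logits = [0.1, 0.3, 2.5, -1.0, 0.0, 0.7, -0.4, 1.1, 0.2, -2.0]

output, used = moe_forward(2.0, experts, router_logits, TOP_K)
active_fraction = TOP_K / NUM_EXPERTS
print(f"experts used: {used}, active fraction: {active_fraction:.0%}")
```

In a real model the router is a learned layer and the experts are feed-forward networks; the point of the sketch is only that the unselected experts are never evaluated for that token.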

The architecture combines Mamba layers, which handle long sequences efficiently, with standard Transformer layers for complex reasoning tasks. By consolidating vision and audio understanding in one model, NVIDIA claims the system achieves up to 9x higher throughput than stacks assembled from separate per-modality models. The model is specifically optimized for agentic computer use, enabling AI assistants to navigate graphical user interfaces and parse intricate documents.

Technical Specifications and Performance

Nemotron 3 Nano Omni has a 256K-token context window, allowing it to process large document sets or long-form video content in a single pass. For video processing, the model employs Conv3D compression, while audio tasks are handled by the Parakeet-TDT speech model. NVIDIA's benchmarks indicate that the model leads on MMLongBench-Doc and WorldSense, which test long-document understanding and audio-visual video understanding respectively.
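To see why a 3D convolution reduces the number of video tokens, the standard convolution output-size formula can be applied along the time, height, and width axes. The sketch below is illustrative only: the article does not give the model's kernel size, stride, or input grid, so every number here is a hypothetical value.

```python
def conv_out(size, kernel, stride, padding=0):
    """Standard convolution output length along one axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1

def conv3d_output_shape(shape, kernel, stride, padding=(0, 0, 0)):
    """Output (frames, height, width) of a 3D convolution over a video grid."""
    return tuple(
        conv_out(n, k, s, p)
        for n, k, s, p in zip(shape, kernel, stride, padding)
    )

# Hypothetical input: 64 frames, each a 32 x 32 grid of patch features.
input_shape = (64, 32, 32)
# Hypothetical compression: 2 x 2 x 2 kernel with matching stride.
output_shape = conv3d_output_shape(input_shape, kernel=(2, 2, 2), stride=(2, 2, 2))

positions_in = input_shape[0] * input_shape[1] * input_shape[2]
positions_out = output_shape[0] * output_shape[1] * output_shape[2]
reduction = positions_in / positions_out

print(output_shape, positions_in, positions_out, reduction)
```

With these assumed settings, each axis is halved, so the number of positions passed to the language model drops by a factor of eight. Fewer positions per clip means more video fits inside a fixed context window.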

Efficiency remains a core focus of this release, with NVIDIA reporting a 4x improvement in compute efficiency. The model requires approximately 25 GB of RAM and is available in multiple precision formats, including BF16, FP8, and NVIDIA's 4-bit NVFP4 format. Lower-precision formats shrink the memory footprint, which is intended to let Nemotron 3 Nano Omni run on a range of hardware configurations while keeping the speed required for real-time applications.
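A rough way to compare the precision formats is to estimate the memory needed for the weights alone. The sketch below is a back-of-the-envelope calculation, not a measurement: it assumes exactly 30 billion parameters stored uniformly at 16, 8, or 4 bits, and it ignores quantization scale factors, the KV cache, activations, and any layers kept at higher precision. The article does not say which format its 25 GB figure refers to.

```python
# Nominal bits per weight for each format (scale-factor overhead ignored).
BITS_PER_WEIGHT = {"BF16": 16, "FP8": 8, "NVFP4": 4}

def weight_memory_gb(num_params, bits_per_weight):
    """Weights-only footprint in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

TOTAL_PARAMS = 30e9  # parameter count quoted in the article

estimates = {
    name: weight_memory_gb(TOTAL_PARAMS, bits)
    for name, bits in BITS_PER_WEIGHT.items()
}

for name, gigabytes in estimates.items():
    print(f"{name}: ~{gigabytes:.0f} GB for weights alone")
```

Note that although only about 3 billion parameters are active per token, all experts must normally stay resident in memory, so the footprint scales with the total parameter count rather than the active one.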

Strategic Implications for Enterprise AI

For CTOs and technology strategists, the shift toward unified multimodal models represents a move away from the complexity of maintaining discrete pipelines for different data types. The ability of the Nemotron 3 Nano Omni to handle diverse inputs within a single framework reduces integration friction and lowers the total cost of ownership for AI infrastructure. This consolidation is particularly relevant for firms developing autonomous agents that must interact with software environments designed for human users.

NVIDIA has made the model accessible through Hugging Face and its own NIM microservices, facilitating rapid deployment for enterprise developers. As of 2026-05-02, the release marks a significant step in NVIDIA's strategy to provide the foundational software layers necessary for the next generation of multimodal AI agents. Organizations focusing on document automation and GUI-based automation may find this unified architecture a critical component in their technical roadmap.
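For developers evaluating a hosted deployment, a request might look like the sketch below, which only builds a JSON payload and sends nothing over the network. It assumes the endpoint accepts the OpenAI-style chat-completions message format with image content parts; the model identifier is a placeholder rather than the real catalog name, so check NVIDIA's documentation for the actual values.

```python
import base64
import json

# Placeholder: the real model identifier is not given in the article.
MODEL_ID = "example-org/placeholder-omni-model"

def build_image_request(prompt, image_bytes, model=MODEL_ID, max_tokens=256):
    """Build an OpenAI-style chat payload with one text part and one image part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/png;base64,{encoded}"},
                    },
                ],
            }
        ],
    }

# Stand-in bytes; a real call would read an actual PNG screenshot from disk.
fake_png = b"\x89PNG\r\n\x1a\n"
payload = build_image_request("Which button submits this form?", fake_png)
print(json.dumps(payload, indent=2))
```

The resulting dictionary could then be posted to the deployment's chat-completions route with any HTTP client, along with whatever authentication the service requires.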


