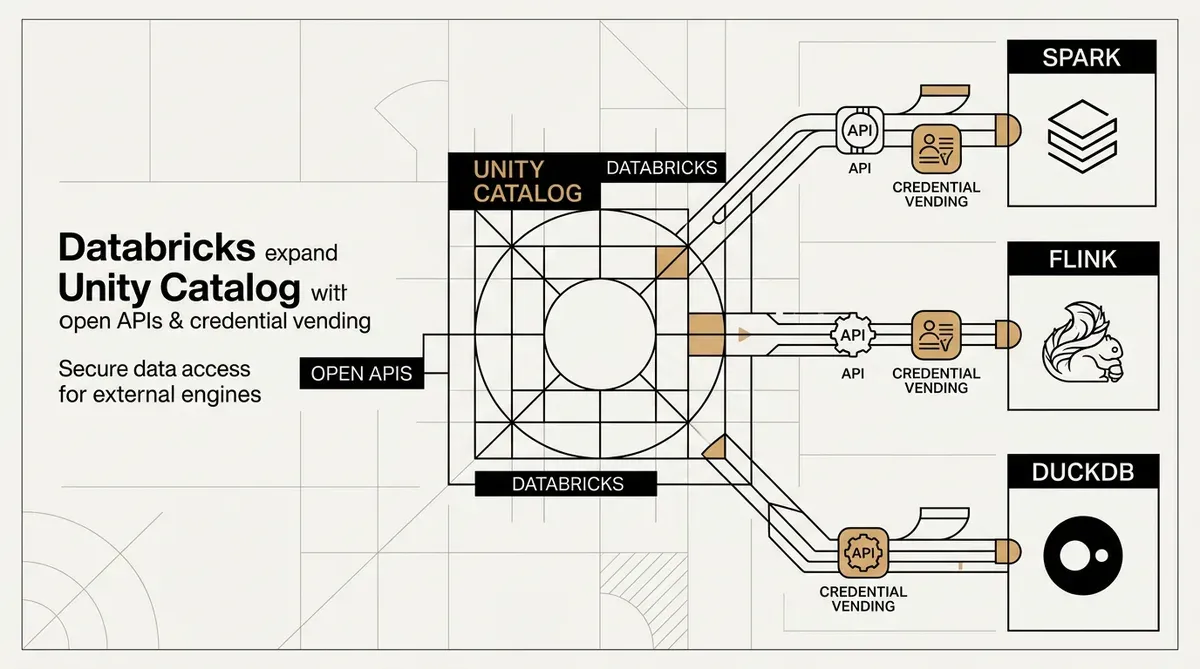

Databricks Enables External Engine Access to Unity Catalog via Open APIs

Databricks has expanded the interoperability of Unity Catalog by launching open APIs and making credential vending generally available. These updates let third-party compute engines read from and write to managed Delta tables. Tools such as Apache Spark, Flink, and DuckDB can now run full data operations, including table creation and updates, directly against the catalog. A new open standard for catalog commits maintains transactional integrity and supports simultaneous writes from different platforms.
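To illustrate why routing commits through a catalog helps with simultaneous writers, here is a minimal in-memory sketch of optimistic concurrency. The names `ToyCatalog`, `CommitConflict`, and `commit_with_retry` are hypothetical and are not part of the Unity Catalog API or the commit standard mentioned above; the sketch only assumes that a commit is accepted when the writer's expected table version still matches the catalog's latest version.

```python
import threading


class CommitConflict(Exception):
    """Raised when another writer committed first."""


class ToyCatalog:
    """Hypothetical in-memory stand-in for a catalog that arbitrates commits."""

    def __init__(self):
        self._lock = threading.Lock()
        self._versions = {}  # table name -> latest committed version

    def latest_version(self, table):
        with self._lock:
            return self._versions.get(table, -1)  # -1 means no commits yet

    def commit(self, table, expected_version, engine):
        """Accept the commit only if the table is still at expected_version."""
        with self._lock:
            current = self._versions.get(table, -1)
            if current != expected_version:
                raise CommitConflict(
                    f"{engine}: expected v{expected_version}, table is at v{current}"
                )
            self._versions[table] = current + 1
            return current + 1


def commit_with_retry(catalog, table, engine, max_attempts=5):
    """Re-read the latest version and retry when another writer wins the race."""
    for _ in range(max_attempts):
        base = catalog.latest_version(table)
        try:
            return catalog.commit(table, base, engine)
        except CommitConflict:
            continue
    raise CommitConflict(f"{engine}: gave up after {max_attempts} attempts")
```

In this toy model, two engines that both start from the same version cannot both succeed: the second one sees a conflict, re-reads the table state, and tries again on top of the first writer's commit.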

The update removes technical barriers associated with proprietary data governance systems. By opening Unity Catalog, Databricks allows companies to use a single governance layer while choosing specific processing engines for different tasks. Security for long-running pipelines is managed through machine-to-machine (M2M) OAuth and automated credential refreshing.
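The credential refreshing described above can be sketched as a small wrapper that fetches a new token shortly before the current one expires. This is an illustration under stated assumptions, not Databricks' implementation: `Token`, `RefreshingToken`, and the `fetch` callable are hypothetical names. In a real pipeline, `fetch` would perform the OAuth client-credentials exchange for a service principal.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Token:
    value: str
    expires_at: float  # seconds since the epoch


class RefreshingToken:
    """Caches a token and refetches it shortly before it expires."""

    def __init__(
        self,
        fetch: Callable[[], Token],
        refresh_margin: float = 300.0,
        clock: Callable[[], float] = time.time,
    ):
        self._fetch = fetch            # hypothetical: performs the token exchange
        self._margin = refresh_margin  # refresh this many seconds before expiry
        self._clock = clock            # injectable so the logic can be tested
        self._token: Optional[Token] = None

    def get(self) -> str:
        now = self._clock()
        if self._token is None or now >= self._token.expires_at - self._margin:
            self._token = self._fetch()
        return self._token.value
```

A long-running job would call `get()` before each request instead of holding a token string, so an expired token is never reused.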

Technical Details of Credential Vending

Credential vending is now generally available for secure data access. The system issues temporary, narrowly scoped credentials to external compute engines, which removes the need for permanent static credentials. Databricks also launched a public preview of credential vending for Volumes. This extension provides governed access to unstructured data such as video and image files.
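As a rough sketch of what an external engine's request for vended credentials might look like, the snippet below builds (but does not send) an HTTP request for short-lived table credentials. The endpoint path, the `table_id` and `operation` body fields, and the `expiration_time` response field are assumptions based on the Unity Catalog REST API and should be checked against the current API reference; the helper names are hypothetical.

```python
import json
import urllib.request

# Assumed endpoint path; verify against the Unity Catalog REST API reference.
TEMP_TABLE_CREDENTIALS_PATH = "/api/2.1/unity-catalog/temporary-table-credentials"


def build_credential_request(host, bearer_token, table_id, operation="READ"):
    """Build (but do not send) a request for short-lived table credentials."""
    if operation not in ("READ", "READ_WRITE"):
        raise ValueError(f"unsupported operation: {operation}")
    body = json.dumps({"table_id": table_id, "operation": operation}).encode("utf-8")
    return urllib.request.Request(
        url=host.rstrip("/") + TEMP_TABLE_CREDENTIALS_PATH,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {bearer_token}",
            "Content-Type": "application/json",
        },
    )


def is_expired(response, now_ms):
    """Return True once the vended credential has passed its expiration time.

    Assumes the response carries `expiration_time` in epoch milliseconds.
    """
    return now_ms >= response["expiration_time"]
```

The request would be sent with `urllib.request.urlopen(req)`. The engine then uses the returned temporary cloud credentials to read or write the table's storage location, and requests a fresh set once `is_expired` reports true.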

The use of open APIs in the Unity Catalog environment provides more flexibility for enterprise AI systems. As organizations build more advanced AI models, they require secure access to governed data across multiple engines. Databricks plans to add attribute-based access controls (ABAC) in later releases. These controls will allow administrators to set security policies at the row and column level for external data reads.
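To make row-level and column-level policies concrete, here is a toy sketch of attribute-based filtering. It does not reflect Databricks' planned ABAC syntax or behavior, which the announcement does not describe; `apply_policy`, the `region` attribute, and the masking rule are all hypothetical.

```python
def apply_policy(rows, user_attrs, row_filter, column_masks):
    """Return only the rows the user may see, with sensitive columns masked.

    row_filter(row, user_attrs) -> bool decides row visibility.
    column_masks maps a column name to mask(value, user_attrs) -> value.
    """
    visible = []
    for row in rows:
        if not row_filter(row, user_attrs):
            continue  # row-level policy: drop rows the user may not read
        masked = {
            col: column_masks[col](val, user_attrs) if col in column_masks else val
            for col, val in row.items()
        }
        visible.append(masked)
    return visible


# Hypothetical policy: users see only their own region, and email
# addresses are redacted unless the user has the "pii_reader" attribute.
def same_region(row, attrs):
    return row["region"] == attrs.get("region")


def mask_email(value, attrs):
    return value if attrs.get("pii_reader") else "***"


rows = [
    {"region": "EU", "email": "a@example.com", "amount": 10},
    {"region": "US", "email": "b@example.com", "amount": 20},
]
result = apply_policy(rows, {"region": "EU"}, same_region, {"email": mask_email})
```

Here `result` contains only the EU row, with its `email` value replaced by `***`.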

New performance features are also available. According to Databricks, Predictive Optimization speeds up queries by up to 20 times and lowers storage expenses by 50 percent. These improvements are intended to reduce the total cost of managing large data sets while maintaining broad Unity Catalog access.

The expanded Unity Catalog framework integrates Delta tables into more diverse workflows. Databricks is using open standards for transactional commits to make its governance layer a standard connector for data stacks. Organizations can use DuckDB for local data tasks or Flink for streaming while keeping security and auditing centralized in Unity Catalog.

